Building Something with Local LLMs

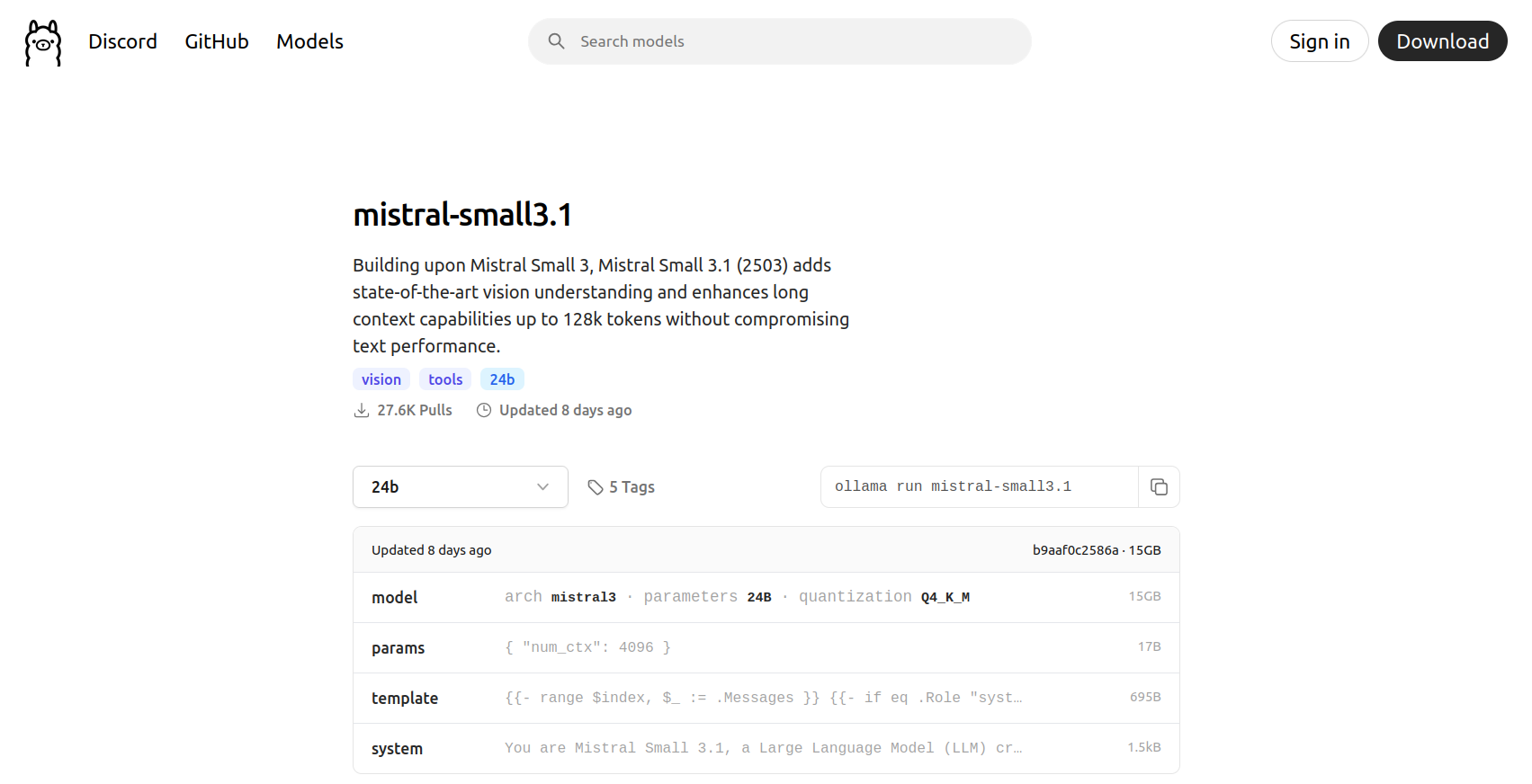

Create a local application that leverages the Ollama server API to do something interesting.

This blog post belongs to a two-part series: Ollama Local LLMs

- Running LLMs Locally with Ollama

- Building Something with Local LLMs (this page)

Is there any value in running small LLMs locally when all the big tech companies offer free credits for truly large LLMs? Will my graphics card explode under my desk?

Building Something with Local LLMs

TODO
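As a possible starting point for an application that talks to the Ollama server API, here is a minimal sketch using only the Python standard library. It assumes Ollama is running on its default local address (`http://localhost:11434`) and that a model has already been pulled; the model name `llama3.2` and the helper names `build_request` and `generate` are placeholders chosen for illustration, not anything this post prescribes.

```python
import json
import urllib.request

# Assumption: the Ollama server is listening on its default local address.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "llama3.2") -> urllib.request.Request:
    """Build a non-streaming POST request for the /api/generate endpoint.

    The default model name is a placeholder; use any model you have pulled.
    """
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "llama3.2") -> str:
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model)) as resp:
        # With "stream": False the server replies with a single JSON object
        # whose "response" field holds the full completion.
        return json.loads(resp.read())["response"]
```

With the server running, calling `generate("Why is the sky blue?")` should return the model's answer as a plain string. Building the request separately from sending it keeps the payload easy to inspect without a live server.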