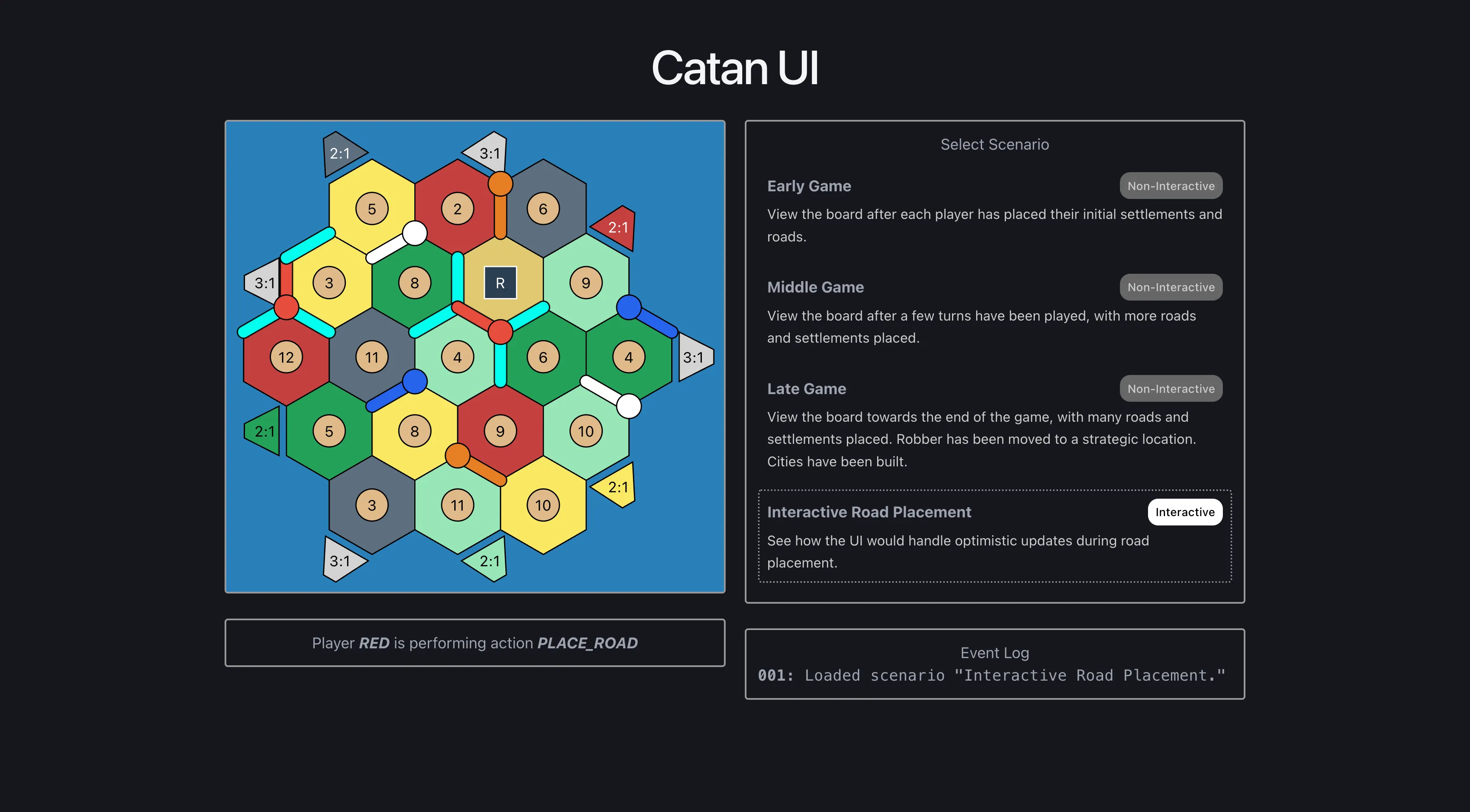

How the (q, r) coordinate system and linear transformations are used to place tiles, roads, and settlements on a responsive SVG Catan board.

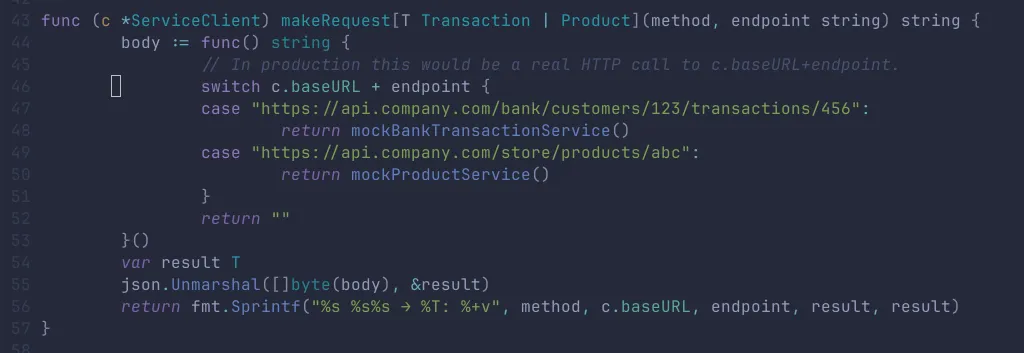

Is there a good reason Go doesn't support type parameters in method signatures? How does this impact the way we write application code?

May 2026

-

How separating external game state from internal render state makes it easy to optimistically place a road and then roll back if the server rejects it.

Catan, React, HTTP, TypeScript, Web Design, Distributed Systems

-

How the (q, r) coordinate system and linear transformations are used to place tiles, roads, and settlements on a responsive SVG Catan board.

Catan, SVG, React, TypeScript, Linear Algebra

April 2026

-

Is there a good reason Go doesn't support type parameters in method signatures? How does this impact the way we write application code?

Go, Generics, Programming Languages, Design Patterns

December 2025

-

A platform for deploying and browsing experimental web apps. Each app is either a container or a set of static files.

HTML, CSS, JavaScript, React, Flask, Python

June 2025

-

Using Ollama models within LM Studio's GUI.

AI, LLM, Ollama, Linux, LM Studio

April 2025

-

Integrate Ollama with GitHub Copilot for AI-powered code completion using local LLMs.

AI, LLM, Ollama, Linux, Copilot

-

Testing which LLMs my NVIDIA GeForce RTX 4060 Ti can run locally through Ollama.

AI, LLM, Ollama, Linux