Running LLMs Locally

Exploring the local model options available in 2026.

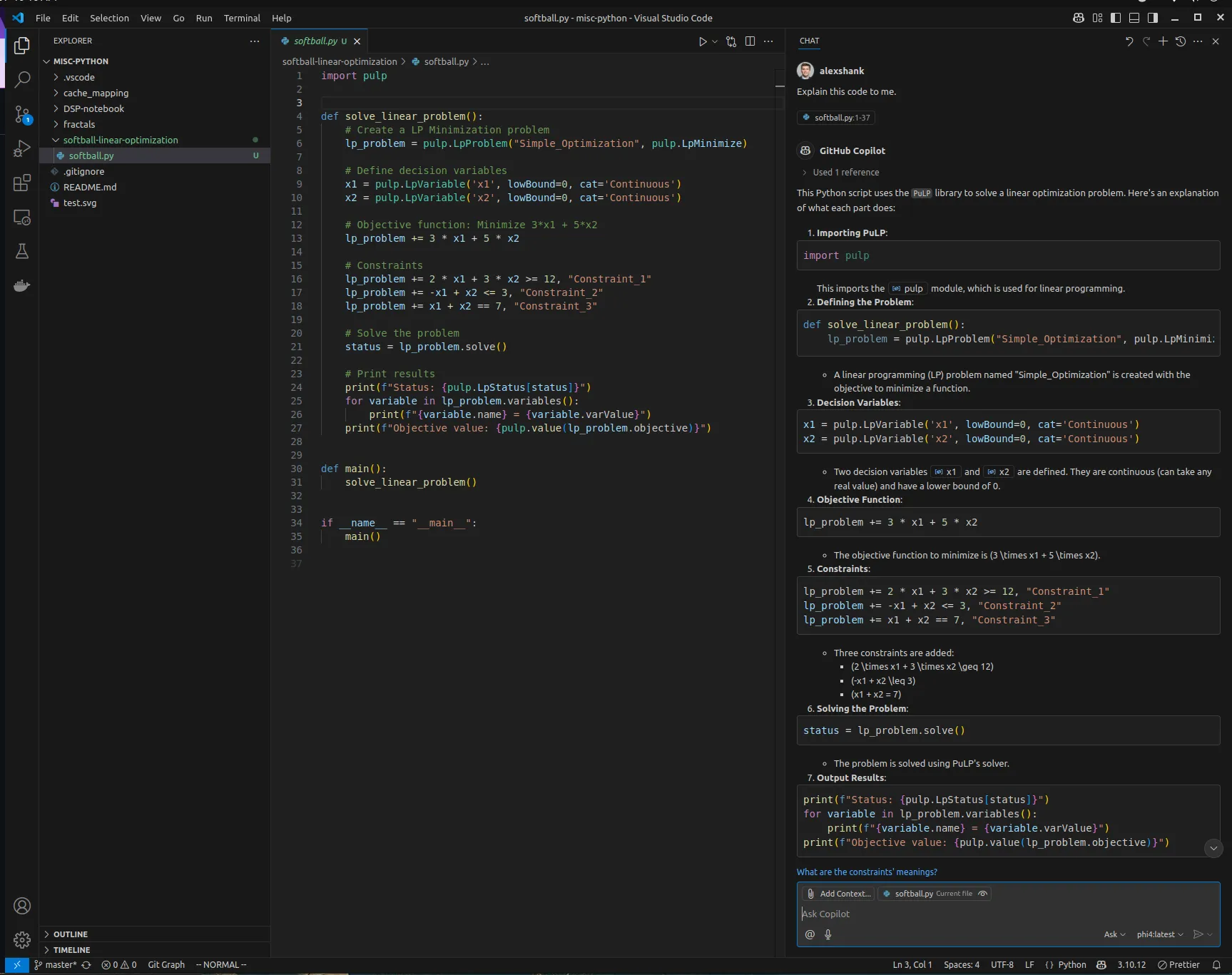

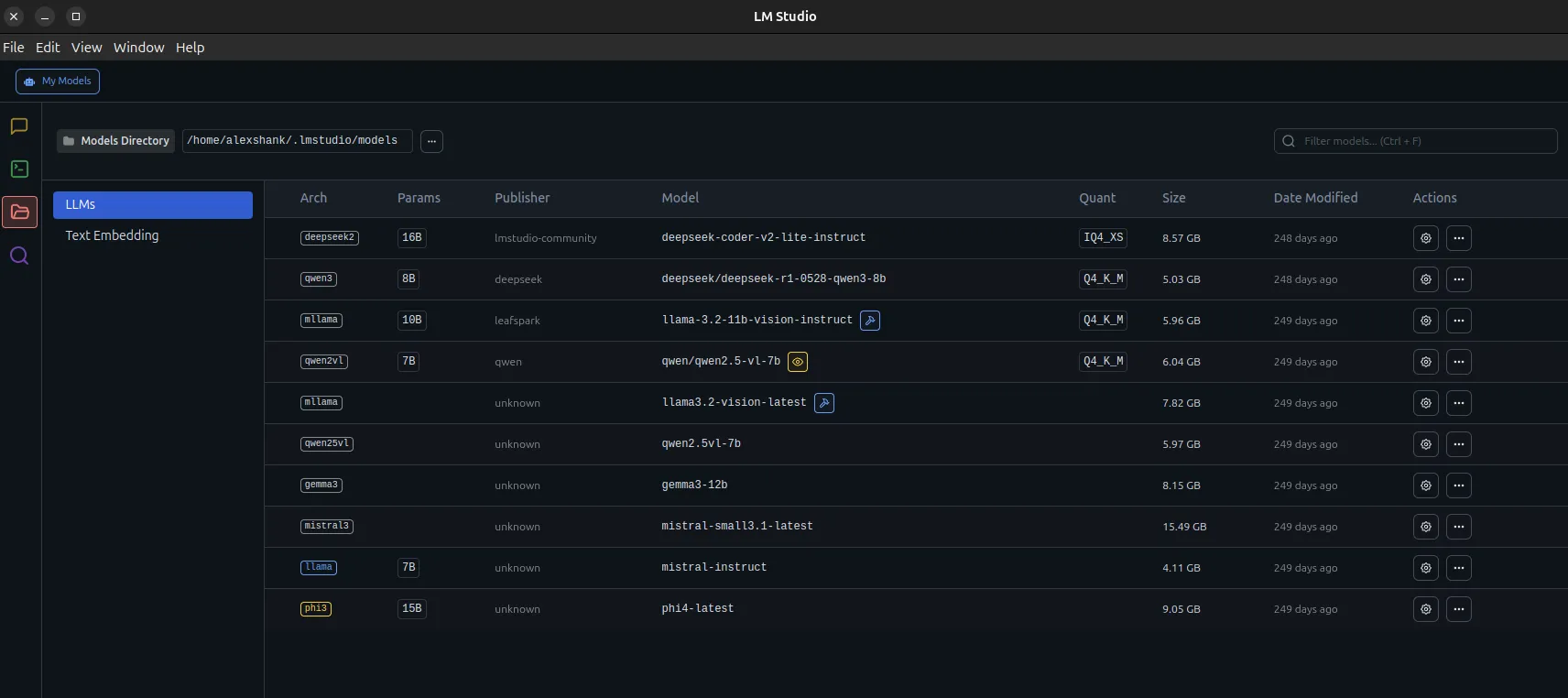

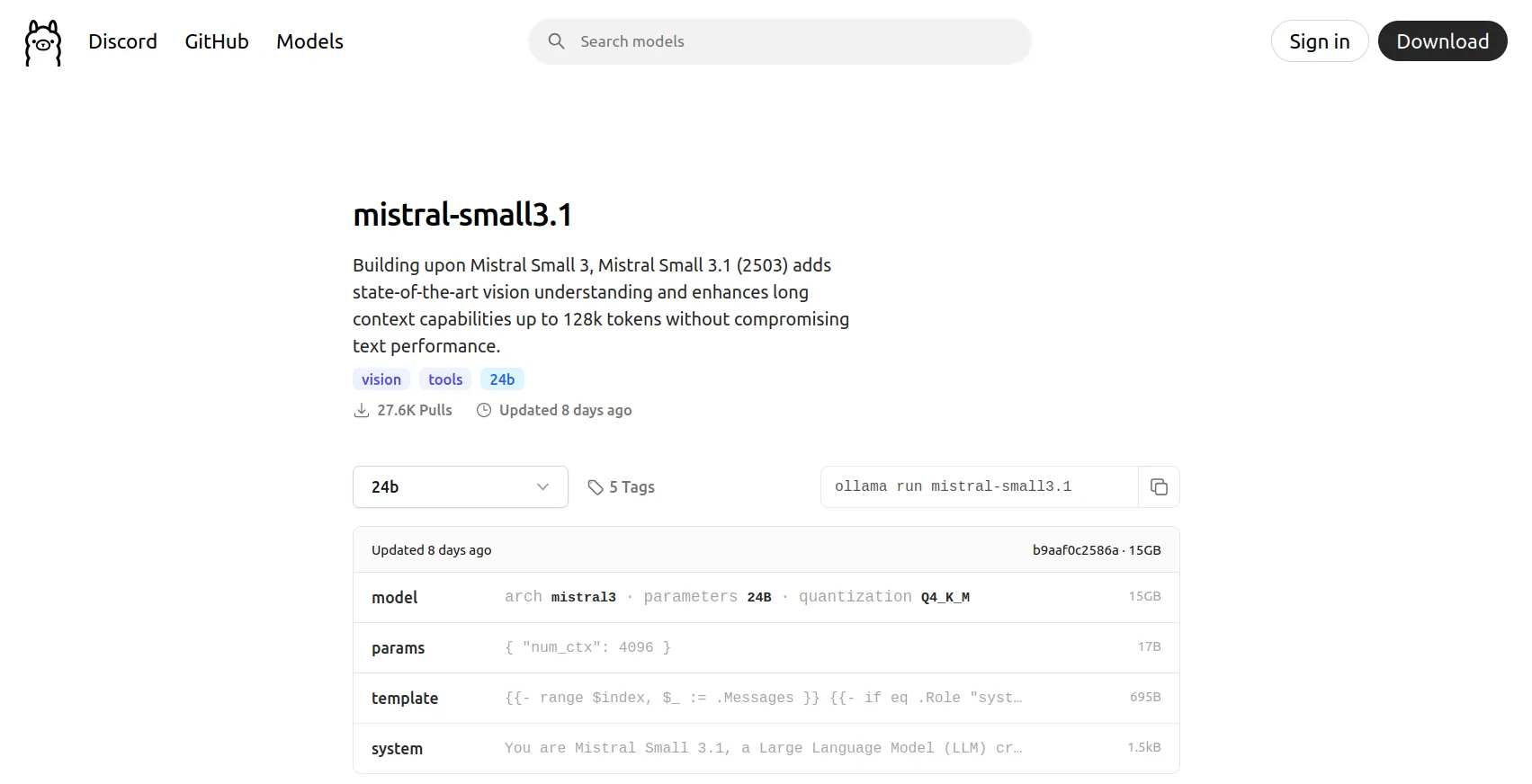

This series explores running LLMs locally. The primary focus is Ollama and its tool integrations (e.g., IDEs). Other popular tools and models, such as Whisper, Stable Diffusion, LlamaIndex, and Gemma 4, will be covered in future posts.

Posts in this Series

This is a three-part blog series.