Ollama Copilot Integration

Integrate Ollama with GitHub Copilot for AI-assisted coding using local LLMs.

This blog post belongs to a three-part series: Running LLMs Locally

- Running LLMs Locally with Ollama

- Ollama Copilot Integration (this page)

- Using LM Studio's Chat Interface

Now that we have an Ollama model running locally, let’s see if we can use it with GitHub Copilot. Copilot was the first tool I used for AI-assisted coding, so it will be interesting to see how it performs with a local model.

I would not recommend local models for development work at this time. The hosted models from Anthropic and other providers are significantly larger than local models, still quite fast, and tailored to their own tooling (e.g., Claude Code). In a decade or so, I could imagine local models becoming more prominent, especially once (1) model providers have established their moats and start turning the screws on pricing, (2) the coding tools themselves can be effective without enormous models, or (3) breakthroughs in model architecture make future small models as effective as today's large models.

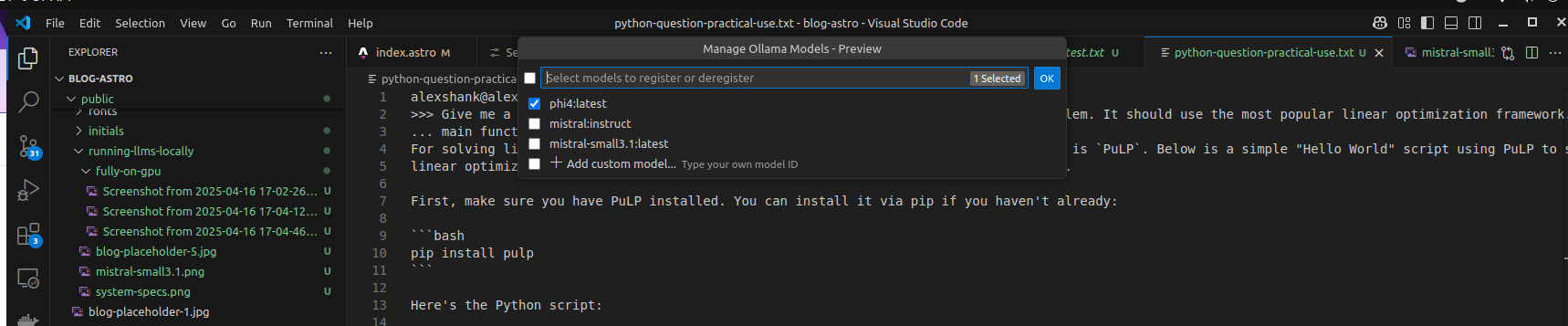

Configuring Copilot to Use Phi-4

I followed the instructions in GitHub's documentation, but note that local model integration now appears to be deprecated.

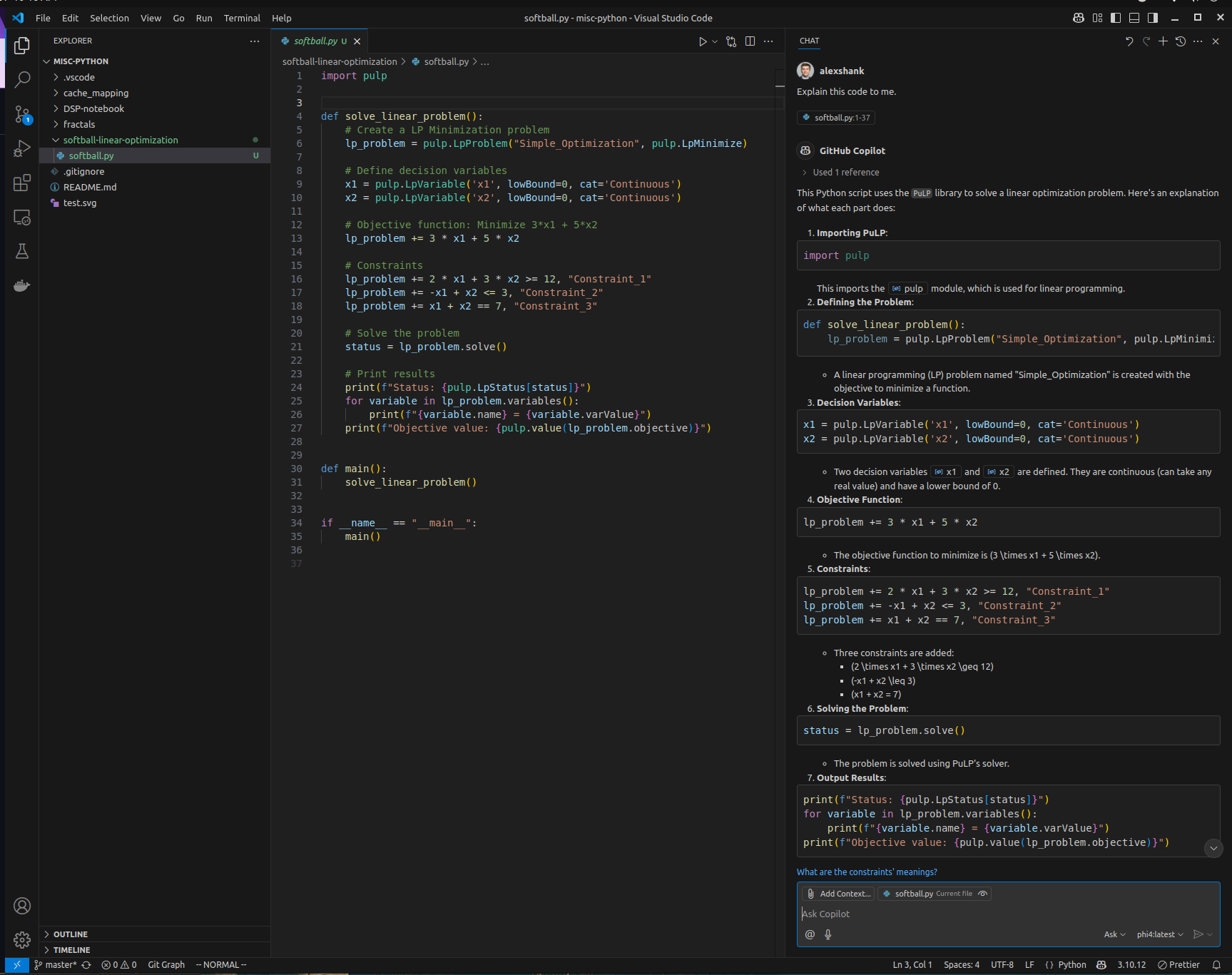

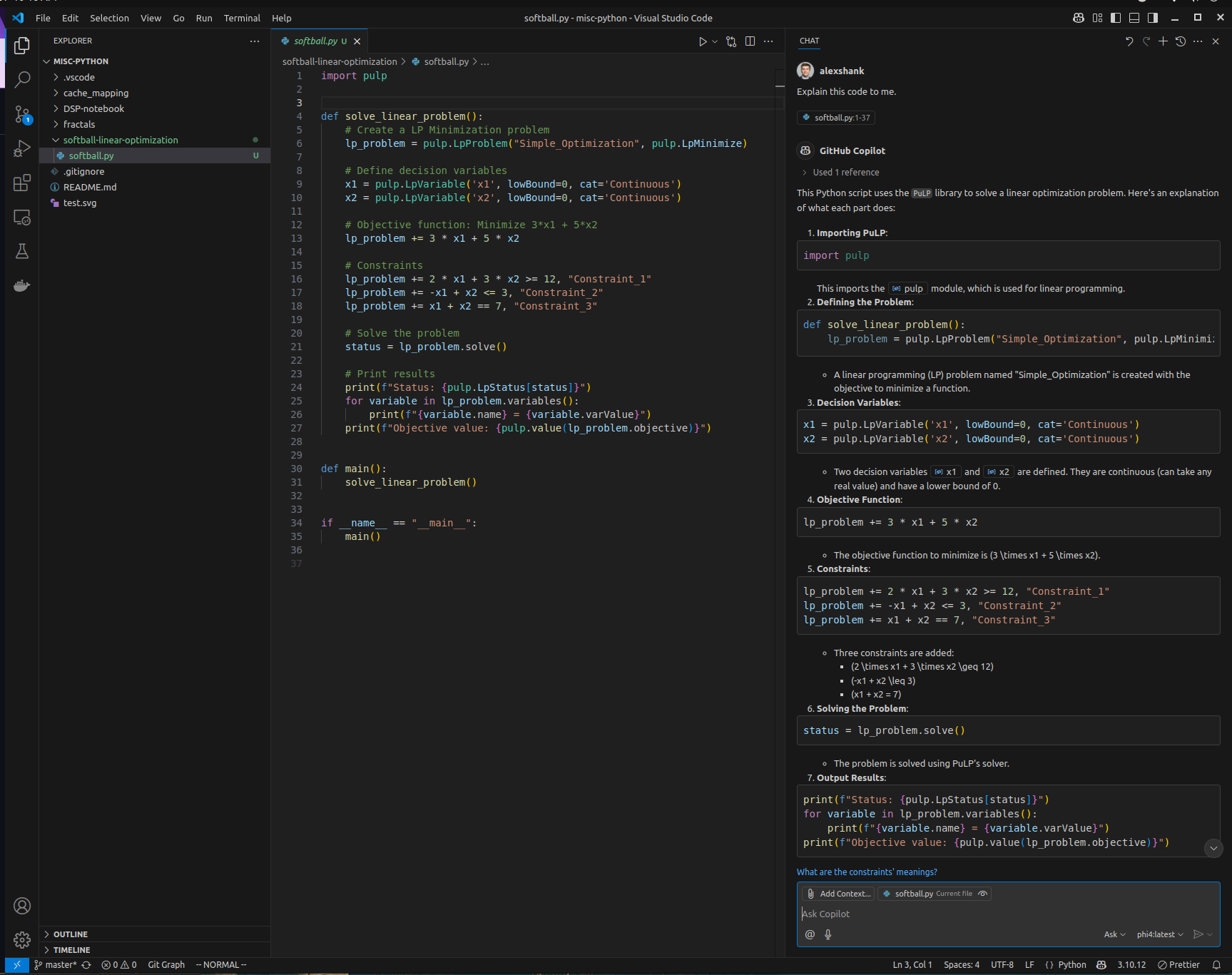
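Before pointing Copilot at Ollama, it can help to confirm that the local server is actually up and that Phi-4 has been pulled. The sketch below is one way to do that, assuming Ollama is listening on its default address (`http://localhost:11434`) and using Ollama's `GET /api/tags` endpoint, which lists installed models. The helper names (`fetch_tags`, `model_names`, `has_model`) are hypothetical, written for this example only.

```python
import json
import urllib.request

# Assumption: Ollama's default local address; change it if you set OLLAMA_HOST.
OLLAMA_URL = "http://localhost:11434"


def model_names(tags_response: dict) -> list[str]:
    """Extract model names (e.g. "phi4:latest") from an /api/tags response body."""
    return [m["name"] for m in tags_response.get("models", [])]


def has_model(tags_response: dict, model: str) -> bool:
    """Return True if `model` is installed; a bare name like "phi4" matches its ":latest" tag."""
    wanted = model if ":" in model else f"{model}:latest"
    return wanted in model_names(tags_response)


def fetch_tags(base_url: str = OLLAMA_URL, timeout: float = 5.0) -> dict:
    """Call GET /api/tags, which lists the models pulled into the local Ollama server.

    Raises urllib.error.URLError if the server is not reachable.
    """
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=timeout) as resp:
        return json.load(resp)
```

With Ollama running, `has_model(fetch_tags(), "phi4")` should return `True` once `ollama pull phi4` has finished; a `URLError` usually means the server is not running at that address.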

Have Phi-4 Analyze Code

Even a small local model like Phi-4 can analyze a simple Python file and offer some useful insights. Again, it won't match the large hosted models, but it's private, fast, and free to use.
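For comparison, the same kind of file review can be requested from Phi-4 directly, without Copilot in the loop. This is a minimal sketch, not how Copilot itself talks to the model: it assumes Ollama's default address and uses the `POST /api/generate` endpoint with `stream` set to `false`, so the reply arrives as a single JSON object whose `response` field holds the text. The prompt wording and the helper names `build_request` and `analyze_file` are hypothetical.

```python
import json
import urllib.request
from pathlib import Path

# Assumption: Ollama's default local address.
OLLAMA_URL = "http://localhost:11434"


def build_request(source: str, model: str = "phi4") -> dict:
    """Build a non-streaming /api/generate body asking the model to review `source`."""
    prompt = (
        "Review the following Python file. Summarize what it does and point out "
        "bugs or possible improvements.\n\n" + source
    )
    # stream=False makes Ollama return one JSON object instead of a stream of chunks.
    return {"model": model, "prompt": prompt, "stream": False}


def analyze_file(path: str, model: str = "phi4", base_url: str = OLLAMA_URL) -> str:
    """Send the file at `path` to the local model and return its text response."""
    body = json.dumps(build_request(Path(path).read_text(), model)).encode("utf-8")
    req = urllib.request.Request(
        f"{base_url}/api/generate",
        data=body,  # supplying a body makes urllib send a POST
        headers={"Content-Type": "application/json"},
    )
    # Local generation can be slow on CPU-only machines, so allow a generous timeout.
    with urllib.request.urlopen(req, timeout=300) as resp:
        return json.load(resp)["response"]
```

Calling `print(analyze_file("example.py"))` (with `example.py` standing in for any small script) prints the model's review; the quality will vary with the model and the prompt.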

In my next post, we'll continue working with the phi4:latest model and see if we can get a local chat interface running with it.